let’s say you are on a bleeding edge distribution, or have access to bleeding edge GTK+.

let’s say you want to use OpenGL inside your GTK+ application.

let’s say you don’t know where to start, except for the API reference of the GtkGLArea widget, and all you can find are examples and tutorials on how to use OpenGL with GLUT, or SDL, or worse, some random toolkit that nobody has heard of.

this clearly won’t do, so I decided to write down how to use OpenGL with GTK+ using the newly added API available with GTK+ 3.16.

disclaimer: I am not going to write an OpenGL tutorial; there are other resources for it, especially for modern API, like Anton’s OpenGL 4 tutorials.

I’ll spend just a minimal amount of time explaining the details of some of the API when it intersects with GTK+’s own. this blog will also form the template for the documentation in the API reference.

Things to know before we start

the OpenGL support inside GTK+ requires core GL profiles, and thus it won’t work with the fixed pipeline API that was common until core profiles arrived with OpenGL 3.2. this means that you won’t be able to use API like glRotatef(), or glBegin()/glEnd() pairs, or any of that stuff.

the dependency on non-legacy profiles has various advantages, mostly in performance and in what the toolkit itself has to support; it does impose minimum requirements on your application, though modern OpenGL is supported by Mesa, macOS, and Windows. before you ask: no, we won’t add legacy profile support to GTK+. it’s basically impossible to support both core and legacy profiles at the same time: you have to choose one or the other, because supporting both would mean duplicating all the code paths. yes, some old hardware does not support OpenGL 3 and later, but there are software fallbacks in place. to be fair, everyone using GL will tell you to stop using legacy profiles, and get on with the times.

Using the GtkGLArea widget

let’s start with an example application that embeds a GtkGLArea widget and uses it to render a simple triangle. I’ll be using the example code that comes with the GTK+ API reference as a template, so you can also look at that section of the documentation. the code is also available in my GitHub repository.

we start by building the main application’s UI from a GtkBuilder template file and its corresponding GObject class, called GlareaAppWindow:

    #ifndef __GLAREA_APP_WINDOW_H__
    #define __GLAREA_APP_WINDOW_H__

    #include <gtk/gtk.h>
    #include "glarea-app.h"

    G_BEGIN_DECLS

    #define GLAREA_TYPE_APP_WINDOW (glarea_app_window_get_type ())

    G_DECLARE_FINAL_TYPE (GlareaAppWindow, glarea_app_window, GLAREA, APP_WINDOW, GtkApplicationWindow)

    GtkWidget *glarea_app_window_new (GlareaApp *app);

    G_END_DECLS

    #endif /* __GLAREA_APP_WINDOW_H__ */

this class is used by the GlareaApp class, which in turn holds the application state:

    #ifndef __GLAREA_APP_H__
    #define __GLAREA_APP_H__

    #include <gtk/gtk.h>

    G_BEGIN_DECLS

    #define GLAREA_ERROR (glarea_error_quark ())

    typedef enum {
      GLAREA_ERROR_SHADER_COMPILATION,
      GLAREA_ERROR_SHADER_LINK
    } GlareaError;

    GQuark glarea_error_quark (void);

    #define GLAREA_TYPE_APP (glarea_app_get_type ())

    G_DECLARE_FINAL_TYPE (GlareaApp, glarea_app, GLAREA, APP, GtkApplication)

    GtkApplication *glarea_app_new (void);

    G_END_DECLS

    #endif /* __GLAREA_APP_H__ */

the GtkBuilder template file contains a bunch of widgets, but the most interesting section (at least for us) is this one:

    <child>
      <object class="GtkGLArea" id="gl_drawing_area">
        <signal name="realize" handler="gl_init" object="GlareaAppWindow" swapped="yes"/>
        <signal name="unrealize" handler="gl_fini" object="GlareaAppWindow" swapped="yes"/>
        <signal name="render" handler="gl_draw" object="GlareaAppWindow" swapped="yes"/>
        <property name="visible">True</property>
        <property name="can_focus">False</property>
        <property name="hexpand">True</property>
        <property name="vexpand">True</property>
      </object>
    </child>

which contains the definition for adding a GtkGLArea and connecting to its signals.

once we connect all elements of the template to the GlareaAppWindow class:

    static void
    glarea_app_window_class_init (GlareaAppWindowClass *klass)
    {
      GtkWidgetClass *widget_class = GTK_WIDGET_CLASS (klass);

      gtk_widget_class_set_template_from_resource (widget_class, "/io/bassi/glarea/glarea-app-window.ui");

      gtk_widget_class_bind_template_child (widget_class, GlareaAppWindow, gl_drawing_area);
      gtk_widget_class_bind_template_child (widget_class, GlareaAppWindow, x_adjustment);
      gtk_widget_class_bind_template_child (widget_class, GlareaAppWindow, y_adjustment);
      gtk_widget_class_bind_template_child (widget_class, GlareaAppWindow, z_adjustment);

      gtk_widget_class_bind_template_callback (widget_class, adjustment_changed);
      gtk_widget_class_bind_template_callback (widget_class, gl_init);
      gtk_widget_class_bind_template_callback (widget_class, gl_draw);
      gtk_widget_class_bind_template_callback (widget_class, gl_fini);
    }

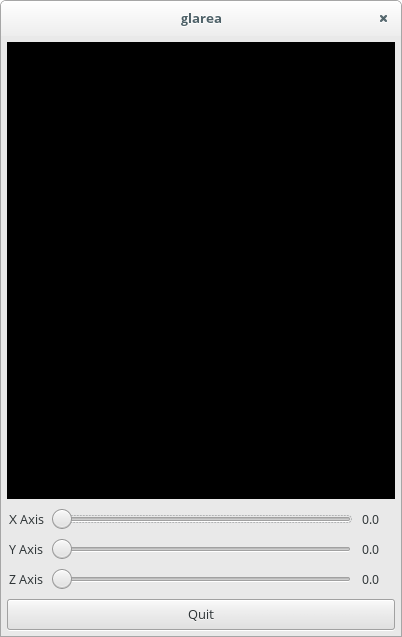

and we compile and run the whole thing, we should get something like this:

the empty GL drawing area

if there were any errors while initializing the GL context, you would see them inside the GtkGLArea widget itself; you can control this behaviour, just like you can control the creation of the GdkGLContext yourself.

as you saw in the code above, we use the GtkWidget and GtkGLArea signals to set up, draw, and tear down the OpenGL state. in the old days of the fixed pipeline API, we could have simply connected to the GtkGLArea::render signal, called some OpenGL API, and have something appear on the screen. those days are long gone. OpenGL requires more code to get going with the programmable pipeline. while this means that you have access to a much leaner (and more powerful) API, some of the convenience went out of the window.

in order to get things going, we need to start by setting up the OpenGL state; we use the GtkWidget::realize signal, as that allows our code to be called after the GtkGLArea widget has created a GdkGLContext, so that we can use it:

    static void
    gl_init (GlareaAppWindow *self)
    {
      /* we need to ensure that the GdkGLContext is set before calling GL API */
      gtk_gl_area_make_current (GTK_GL_AREA (self->gl_drawing_area));

      /* initialize the shaders and retrieve the program data */
      GError *error = NULL;
      if (!init_shaders (&self->program,
                         &self->mvp_location,
                         &self->position_index,
                         &self->color_index,
                         &error))
        {
          gtk_gl_area_set_error (GTK_GL_AREA (self->gl_drawing_area), error);
          g_error_free (error);
          return;
        }

      /* initialize the vertex buffers */
      init_buffers (self->position_index, self->color_index, &self->vao);
    }

in the same way, we use GtkWidget::unrealize to free the resources we created inside the gl_init callback:

    static void
    gl_fini (GlareaAppWindow *self)
    {
      /* we need to ensure that the GdkGLContext is set before calling GL API */
      gtk_gl_area_make_current (GTK_GL_AREA (self->gl_drawing_area));

      /* destroy all the resources we created */
      glDeleteVertexArrays (1, &self->vao);
      glDeleteProgram (self->program);
    }

at this point, the code to draw the content of the GtkGLArea is:

    static gboolean
    gl_draw (GlareaAppWindow *self)
    {
      /* clear the viewport; the viewport is automatically resized when
       * the GtkGLArea gets an allocation
       */
      glClearColor (0.5, 0.5, 0.5, 1.0);
      glClear (GL_COLOR_BUFFER_BIT);

      /* draw our object */
      draw_triangle (self);

      /* flush the contents of the pipeline */
      glFlush ();

      return FALSE;
    }

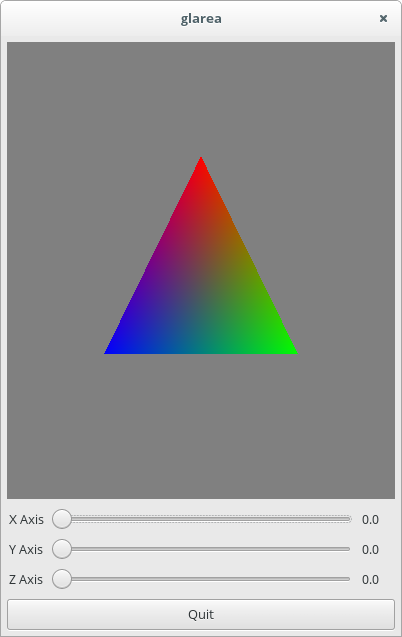

and voilà:

Houston, we have a triangle

obviously, it’s a bit more complicated than that.

let’s start with the code that initializes the resources inside gl_init(); what do init_buffers() and init_shaders() do? the former creates the vertex buffers on the graphics pipeline, and populates them with the per-vertex data that we want to use later on, namely: the position of each vertex, and its color:

    /* the vertex data is constant */
    struct vertex_info {
      float position[3];
      float color[3];
    };

    static const struct vertex_info vertex_data[] = {
      { {  0.0f,  0.500f, 0.0f }, { 1.f, 0.f, 0.f } },
      { {  0.5f, -0.366f, 0.0f }, { 0.f, 1.f, 0.f } },
      { { -0.5f, -0.366f, 0.0f }, { 0.f, 0.f, 1.f } },
    };

init_buffers() does that by creating two objects:

- a Vertex Array Object, which records the vertex buffer bindings and the attribute setup that follow

- a Vertex Buffer Object, which holds the vertex data

    /* we need to create a VAO to store the other buffers */
    glGenVertexArrays (1, &vao);
    glBindVertexArray (vao);

    /* this is the VBO that holds the vertex data */
    glGenBuffers (1, &buffer);
    glBindBuffer (GL_ARRAY_BUFFER, buffer);
    glBufferData (GL_ARRAY_BUFFER, sizeof (vertex_data), vertex_data, GL_STATIC_DRAW);

    /* enable and set the position attribute */
    glEnableVertexAttribArray (position_index);
    glVertexAttribPointer (position_index, 3, GL_FLOAT, GL_FALSE,
                           sizeof (struct vertex_info),
                           (GLvoid *) (G_STRUCT_OFFSET (struct vertex_info, position)));

    /* enable and set the color attribute */
    glEnableVertexAttribArray (color_index);
    glVertexAttribPointer (color_index, 3, GL_FLOAT, GL_FALSE,
                           sizeof (struct vertex_info),
                           (GLvoid *) (G_STRUCT_OFFSET (struct vertex_info, color)));
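to make the stride and offset arguments of glVertexAttribPointer() a bit more concrete, here is a minimal sketch in plain C that computes the same values without any GL or GLib dependency. it assumes the vertex_info layout shown earlier; the attrib_layout struct and the helper functions are hypothetical names used only for illustration, and the standard offsetof() stands in for G_STRUCT_OFFSET().

```c
#include <assert.h>
#include <stddef.h>

/* same layout as the vertex_info struct used for the vertex data */
struct vertex_info {
  float position[3];
  float color[3];
};

/* hypothetical helper type, not part of GTK+ or OpenGL */
struct attrib_layout {
  size_t stride;  /* bytes between the start of two consecutive vertices */
  size_t offset;  /* bytes from the start of a vertex to the attribute */
};

/* build the layout of an attribute made of n_components floats */
static struct attrib_layout
make_layout (size_t offset, size_t n_components)
{
  struct attrib_layout layout = { sizeof (struct vertex_info), offset };

  /* the attribute has to fit inside a single vertex */
  assert (offset + n_components * sizeof (float) <= layout.stride);

  return layout;
}

static struct attrib_layout
position_layout (void)
{
  return make_layout (offsetof (struct vertex_info, position), 3);
}

static struct attrib_layout
color_layout (void)
{
  return make_layout (offsetof (struct vertex_info, color), 3);
}
```

both attributes share the same stride, because they are interleaved inside the same buffer; only the offset changes, which is why the two glVertexAttribPointer() calls differ only in their first and last arguments.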

the init_shaders() function is a bit more complex, as it needs to

- compile a vertex shader

- compile a fragment shader

- link both the vertex and the fragment shaders together into a program

- extract the location of the attributes and uniforms

the vertex shader is executed once for each vertex, and establishes the location of each vertex:

    #version 150

    in vec3 position;
    in vec3 color;

    uniform mat4 mvp;

    smooth out vec4 vertexColor;

    void main() {
      gl_Position = mvp * vec4(position, 1.0);
      vertexColor = vec4(color, 1.0);
    }
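if the `mvp * vec4(position, 1.0)` expression looks opaque, the following sketch does the same multiplication on the CPU, in plain C. it is only meant as an illustration of what the GPU computes for each vertex: transform_position() is a hypothetical name, and the matrix is assumed to be stored in column-major order, which is the layout glUniformMatrix4fv() expects when its transpose argument is GL_FALSE.

```c
#include <assert.h>
#include <stddef.h>

/* multiply a column-major 4x4 matrix by (x, y, z, 1), mirroring
 * "mvp * vec4(position, 1.0)" in the vertex shader
 */
static void
transform_position (const float mvp[16],
                    const float position[3],
                    float       out[4])
{
  assert (mvp != NULL && position != NULL && out != NULL);

  /* promote the 3D position to homogeneous coordinates */
  const float in[4] = { position[0], position[1], position[2], 1.0f };

  for (int row = 0; row < 4; row++)
    {
      out[row] = 0.0f;

      /* element (row, col) of a column-major matrix lives at col * 4 + row */
      for (int col = 0; col < 4; col++)
        out[row] += mvp[col * 4 + row] * in[col];
    }
}
```

with an identity matrix the position comes out unchanged, which is exactly what happens to our triangle before we start touching the rotation controls.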

it also has access to the vertex data that we stored inside the vertex buffer object, which we pass to the fragment shader:

    #version 150

    smooth in vec4 vertexColor;

    out vec4 outputColor;

    void main() {
      outputColor = vertexColor;
    }

the fragment shader is executed once for each fragment, i.e. each pixel-sized piece of the surface between the vertices.
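the value that each fragment receives in vertexColor is a blend of the three per-vertex colors. as a rough sketch of that blending, and assuming that all three vertices end up with the same w component (in which case the `smooth` interpolation reduces to a plain weighted average), the computation looks like the C code below. interpolate_color() is a hypothetical name; the weights are the barycentric coordinates of the fragment inside the triangle, and they are expected to add up to 1.

```c
#include <assert.h>

/* blend three per-vertex RGB colors using the barycentric weights of a
 * fragment; this is a CPU-side illustration, not something you need to
 * write yourself: the GPU does it between the vertex and fragment stages
 */
static void
interpolate_color (const float color0[3],
                   const float color1[3],
                   const float color2[3],
                   const float weights[3],
                   float       out[3])
{
  const float sum = weights[0] + weights[1] + weights[2];

  /* barycentric weights always add up to 1 (within rounding) */
  assert (sum > 0.999f && sum < 1.001f);

  for (int c = 0; c < 3; c++)
    out[c] = weights[0] * color0[c]
           + weights[1] * color1[c]
           + weights[2] * color2[c];
}
```

a fragment sitting exactly on a vertex has a weight of 1 for that vertex and 0 for the other two, so it gets the pure vertex color; everything in between is a mix.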

once both the vertex buffers and the program are uploaded into the graphics pipeline, the GPU will render the result of the program operating over the vertex and fragment data — in our case, a triangle with colors interpolating between each vertex:

    static void
    draw_triangle (GlareaAppWindow *self)
    {
      if (self->program == 0 || self->vao == 0)
        return;

      /* load our program */
      glUseProgram (self->program);

      /* update the "mvp" matrix we use in the shader */
      glUniformMatrix4fv (self->mvp_location, 1, GL_FALSE, &(self->mvp[0]));

      /* use the buffers in the VAO */
      glBindVertexArray (self->vao);

      /* draw the three vertices as a triangle */
      glDrawArrays (GL_TRIANGLES, 0, 3);

      /* we finished using the buffers and program */
      glBindVertexArray (0);
      glUseProgram (0);
    }

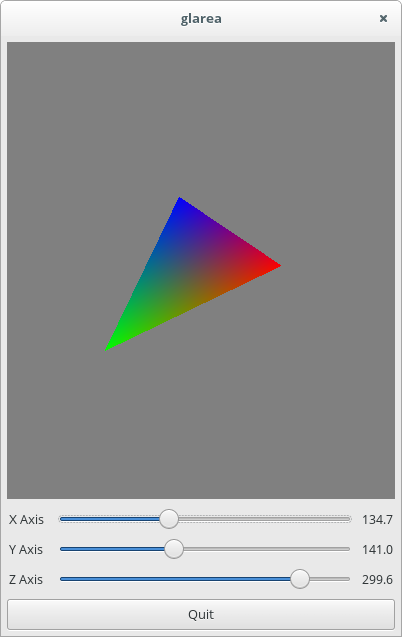

now that we have a static triangle, we should connect the UI controls and rotate it around each axis. in order to do that, we compute the transformation matrix using the value from the three GtkScale widgets as the rotation angle around each axis. first of all, we connect to the GtkAdjustment::value-changed signal, update the rotation angles, and use them to generate the rotation matrix:

    static void
    adjustment_changed (GlareaAppWindow *self,
                        GtkAdjustment   *adj)
    {
      double value = gtk_adjustment_get_value (adj);

      /* update the rotation angles */
      if (adj == self->x_adjustment)
        self->rotation_angles[X_AXIS] = value;

      if (adj == self->y_adjustment)
        self->rotation_angles[Y_AXIS] = value;

      if (adj == self->z_adjustment)
        self->rotation_angles[Z_AXIS] = value;

      /* recompute the mvp matrix */
      compute_mvp (self->mvp,
                   self->rotation_angles[X_AXIS],
                   self->rotation_angles[Y_AXIS],
                   self->rotation_angles[Z_AXIS]);

      /* queue a redraw on the GtkGLArea */
      gtk_widget_queue_draw (self->gl_drawing_area);
    }

then we queue a redraw on the GtkGLArea widget, and that’s it; the draw_triangle() code will take the matrix, upload it to the mvp uniform of the vertex shader, and the shader will use it to transform the location of each vertex.

add a few more triangles and you get Quake

there is obviously a lot more that you can do, but this should cover the basics.

Porting from older libraries

back in the GTK+ 2.x days, there were two external libraries used to render OpenGL pipelines into GTK+ widgets:

- GtkGLExt

- GtkGLArea

the GDK drawing model was simpler, in those days, so these libraries just took a native windowing system surface, bound it to a GL context, and expected everything to work. it goes without saying that it is not the case any more when it comes to GTK+ 3, and with modern graphics architectures.

both libraries also had the unfortunate idea of abusing the GDK and GTK namespaces, which means that, if ported to integrate with GTK+ 3, they would collide with GTK’s own symbols. this means that these two libraries are forever tied to GTK+ 2.x, and as they are unmaintained already, you should not be using them to write new code.

Porting from GtkGLExt

GtkGLExt is the library GTK+ 2.x applications used to integrate OpenGL rendering inside GTK+ widgets. it is currently unmaintained, and there is no GTK+ 3.x port. not only is GtkGLExt targeting outdated API inside GTK+, it’s also fairly tied to the old OpenGL 2.1 API and rendering model. this means that if you are using it, you’re also using one legacy API on top of another legacy API.

if you were using GtkGLExt you will likely remove most of the code dealing with initialization and the creation of the GL context. you won’t be able to use any random widget with a GdkGLContext, but you’ll be limited to using GtkGLArea. while there isn’t anything specific about the GtkGLArea widget, GtkGLArea will handle context creation for you, as well as creating the offscreen framebuffer and the various ancillary buffers that you can use to render on.

Porting from GtkGLArea

GtkGLArea is another GTK+ 2.x library that was used to integrate OpenGL rendering with GTK+. it has seen a GTK+ 3.x port, as well as a namespace change that avoids the collision with the GTK namespace, but the internal implementation is also pretty much tied to OpenGL 2.1 API and rendering model.

unlike GtkGLExt, GtkGLArea only provides you with the API to create a GL context, and a widget to render into. it is not tied to the GDK drawing model, so you’re essentially bypassing GDK’s internals, which means that changes inside GTK+ may break your existing code.

Resources

- Anton’s OpenGL 4 tutorials, by Anton Gerdelan

- Learning Modern 3D Graphics Programming, by Jason L. McKesson

- GtkGLArea API reference